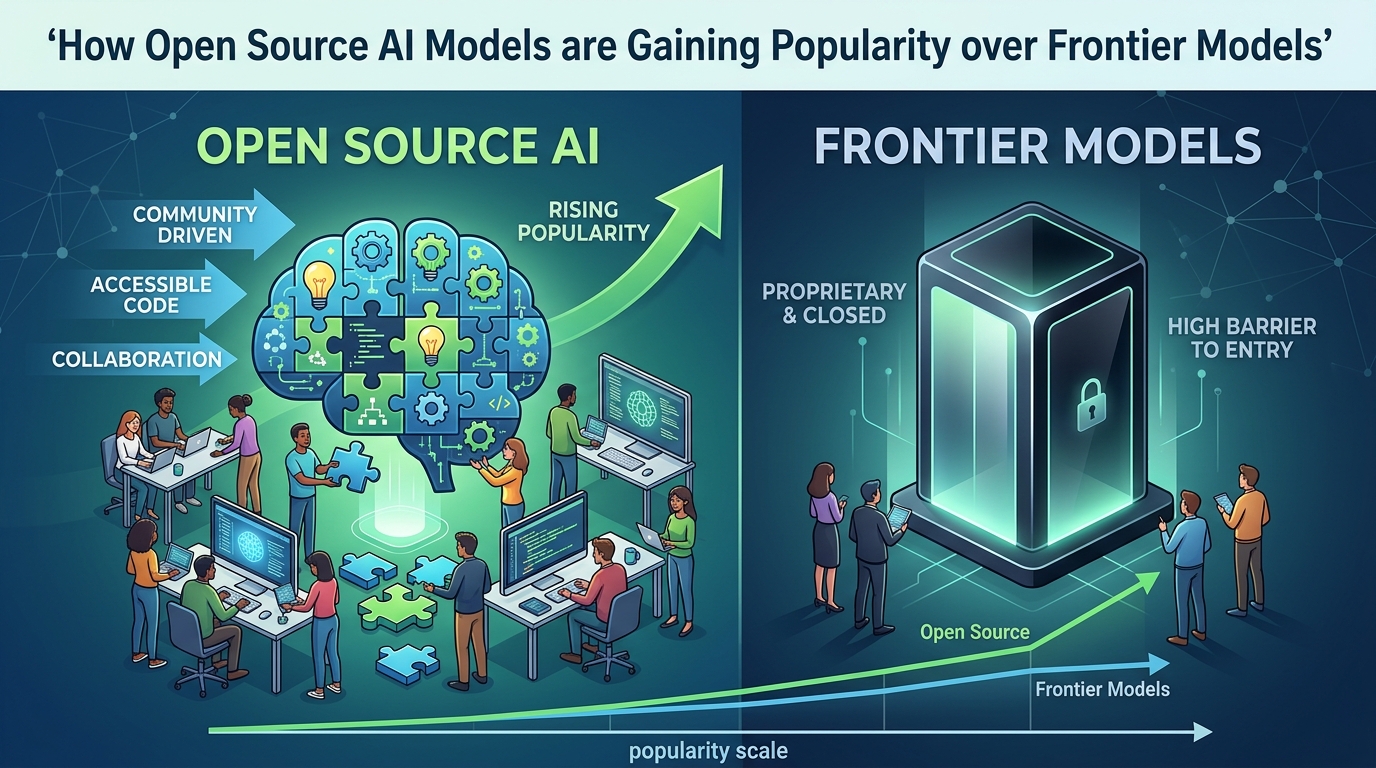

How Open Source AI Models are Gaining Popularity over Frontier Models

Open-source AI models aren’t replacing frontier models everywhere, but they’re quickly becoming the go-to choice for a growing share of real-world AI work. The shift is happening because the quality gap has narrowed, the cost difference has widened, and organizations increasingly want more say over privacy, deployment, customization, and long-term platform risk. Recent data from the Stanford AI Index, Databricks, GitHub’s Octoverse, Meta’s Llama download figures, and Hugging Face helps explain why open models are picking up steam so fast.

Open Models Are Winning Because the Performance Gap Has Narrowed

For most of the generative AI boom, frontier models had a clear edge in quality. That’s still true at the very top end for reasoning and multimodal capability, but the size of that lead has changed dramatically. The Stanford AI Index shows the gap between the leading closed-weight model and the leading open-weight model on the Chatbot Arena leaderboard shrank from 8.04% in early January 2024 to just 1.70% by February 2025. That’s a drop of more than six percentage points in about 13 months.

The same report also shows competition at the top has gotten tighter overall. The difference between the top-ranked and 10th-ranked models on Chatbot Arena dropped from 11.9% to 5.4%, while the gap between the top two models fell from 4.9% to 0.7%. Put simply, AI quality is getting more competitive across the board. Once the lead gets small enough, buyers start caring less about who’s technically number one and more about control, operating cost, latency, and how easy it is to integrate. That’s where open models start looking a lot more appealing.

This doesn’t mean frontier labs have stopped leading. Stanford points out that newer reasoning approaches can still push proprietary systems much higher on difficult tasks. In one example from the same Stanford report, OpenAI’s o1 scored 74.4% on an International Mathematical Olympiad qualifying exam compared with 9.3% for GPT-4o, but the catch was that o1 was nearly six times more expensive and 30 times slower. That trade-off matters. The best model isn’t always the most practical one.

Cost Has Become One of the Biggest Reasons Open Models Are Rising

The economics of AI have shifted fast. Stanford reports that the cost of querying a model with GPT-3.5-level performance dropped from $20 per million tokens in November 2022 to just $0.07 per million tokens by October 2024, a reduction of more than 280-fold. The 2025 AI Index also says hardware costs have been falling by roughly 30% per year while energy efficiency has improved by about 40% annually.
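The arithmetic behind those figures is easy to check. The sketch below recomputes the fold reduction from the two prices quoted above and, purely as an illustrative assumption, compounds the roughly 30% annual hardware cost decline over a hypothetical three-year horizon. The function names are made up for this example.

```python
def fold_reduction(old_price: float, new_price: float) -> float:
    """How many times cheaper new_price is than old_price."""
    return old_price / new_price


def compounded_cost(start_cost: float, annual_decline: float, years: int) -> float:
    """Cost remaining after `years` of a constant fractional decline per year."""
    return start_cost * (1.0 - annual_decline) ** years


# Prices per million tokens, as quoted from the Stanford AI Index above.
price_nov_2022 = 20.00
price_oct_2024 = 0.07

fold = fold_reduction(price_nov_2022, price_oct_2024)
percent_drop = (1 - price_oct_2024 / price_nov_2022) * 100
print(f"{fold:.1f}x cheaper, a {percent_drop:.2f}% price drop")

# Assumption: a steady 30% yearly decline held for three years (hypothetical horizon).
remaining = compounded_cost(1.0, 0.30, 3)
print(f"Hardware cost after 3 years: {remaining:.3f} of the starting cost")
```

The first calculation lands at roughly 286-fold, consistent with the "more than 280" figure. The second shows how quickly a steady annual decline compounds, though real hardware prices won’t follow a perfectly smooth curve.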

Those trends work in favor of open-source and open-weight models because they make self-hosting, fine-tuning, and task-specific deployment way more practical than they were even a year ago. Small and mid-sized models can now deliver acceptable performance for internal copilots, document search, support automation, coding assistants, knowledge bots, edge inference, and structured workflows without needing frontier-model pricing.

That’s also one reason the market reacted so strongly to cheaper open alternatives. Reuters reported that DeepSeek, in a peer-reviewed paper, said its R1 model cost only $294,000 to train. Even with debate around the full accounting behind such claims, the broader message was clear: high-impact reasoning models no longer look like something only a few U.S. frontier labs can produce at viable quality. That perception shift matters almost as much as the number itself.

Enterprises Are Choosing Open Models for Control, Not Just Price

Cost alone doesn’t explain the surge in popularity. Many organizations prefer open models because they offer operational control. Companies can run them in their own cloud, on-premises, or at the edge; audit inputs and outputs more closely; combine them with proprietary datasets; and reduce dependence on a single vendor’s API roadmap or pricing decisions.

You can see this in adoption data. Databricks says in its enterprise adoption report that, among companies using large language models, 76% are choosing open-source models, often alongside proprietary ones. The same report says organizations put 11 times more AI models into production year over year and that 70% of companies using generative AI are using tools such as vector databases and retrieval systems to ground models with private data.

That combination matters. Once a business is already building retrieval-augmented generation, domain tuning, and internal workflow automation, the value of owning the model layer goes up. Open models fit that architecture well because they can be customized and deployed where the data lives. Mistral makes this point directly in its enterprise positioning, saying customers can customize, fine-tune, and run models “anywhere,” according to the company’s enterprise model page.

For regulated industries, the appeal is even stronger. In its industry usage findings, Databricks says highly regulated sectors such as financial services and healthcare are among the fastest adopters of AI, and it ties that preference to governance, data sovereignty, and deployment control. In those environments, being able to keep model execution closer to internal systems isn’t a side benefit. It’s often the deciding factor.

The Ecosystem Around Open Models Is Growing Faster Than Any Single Vendor Stack

Popularity isn’t measured only by model benchmarks. It also shows up in developer behavior, repository growth, tooling maturity, and deployment infrastructure. That’s where open AI has built a powerful flywheel.

GitHub’s data shows how large that flywheel has become. Microsoft’s summary of GitHub’s Octoverse 2025 says GitHub now has more than 180 million developers, 1.12 billion contributions to public and open-source repositories, and more than 4.3 million AI-related repositories, nearly double the 2023 figure. That’s not just growth in AI usage. It’s growth in collaborative AI building.

Even more telling is where the open-source momentum is concentrating. GitHub says six of the ten fastest-growing open-source projects by contributors in 2025 were directly focused on AI infrastructure or tooling, according to the Octoverse tooling analysis. Projects such as vLLM, SGLang, RAGFlow, Continue, ComfyUI, and Ollama matter because they make open models easier to serve, optimize, compose, and integrate. In practice, many companies aren’t choosing between one model and another. They’re choosing between ecosystems. Right now, the open ecosystem is expanding faster in deployment tooling than any single proprietary platform can.
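One practical consequence of that tooling is portability. Serving projects such as vLLM and Ollama expose an OpenAI-compatible HTTP API, so the same client code can target different backends by changing a base URL. The sketch below only builds the request and doesn’t send it. The ports are those projects’ usual defaults, while the model name and the `build_chat_request` helper are placeholders assumed for illustration.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for an OpenAI-compatible server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Assumed local endpoints; adjust to wherever the server actually runs.
# "my-open-model" is a placeholder, not a real model identifier.
BACKENDS = {
    "vllm": ("http://localhost:8000", "my-open-model"),
    "ollama": ("http://localhost:11434", "my-open-model"),
}

for name, (base_url, model) in BACKENDS.items():
    req = build_chat_request(base_url, model, "Summarize our refund policy.")
    print(name, req.get_method(), req.full_url)

# Sending the request requires a running server, so it is left commented out:
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

Because only the base URL and model name change between backends, swapping the serving layer doesn’t require rewriting application code. That’s a small-scale version of the ecosystem choice described above.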

That same GitHub analysis also found that nearly half of all new AI projects on GitHub in 2025 were built primarily in Python. Python has become the common layer for training, fine-tuning, inference orchestration, evaluation, and agent workflows. Open models plug naturally into that world.

Downloads and Model Releases Show Real Market Pull

The strongest sign of popularity is repeated use. Meta announced in March 2025 that Llama had passed one billion downloads, according to the company’s official Llama update. That figure doesn’t mean one billion production deployments, but it does show enormous developer and enterprise demand for accessible, modifiable model weights.

Hugging Face’s latest ecosystem review, published in Spring 2026, points in the same direction. The company says that in 2025 the majority of trending newly created models were either developed in China or derived from a model developed in China, and that organizations that previously leaned closed shifted decisively toward open releases. It also notes that individual users, not just large labs, are now among the notable sources of competitive trending models.

That matters because popularity in AI increasingly comes from accessibility. When smaller companies, public institutions, universities, startups, and individual builders can meaningfully participate in the model ecosystem, attention shifts away from a handful of frontier APIs toward a broader, more distributed stack. Open models benefit from that distribution by design.

Open Models Also Fit the Sovereignty and Compliance Story

Another reason open models are gaining popularity is national and organizational sovereignty. Governments and enterprises increasingly want models they can inspect, adapt, and run under local legal frameworks. Hugging Face explicitly connects open-weight models to sovereignty, arguing that they let governments and public institutions fine-tune systems on local data and deploy them on domestic infrastructure.

There’s also a definitional shift happening here. The Open Source Initiative now distinguishes true open-source AI from merely open-weight releases. Under the OSI AI definition, an open-source AI system should provide the freedoms to use, study, modify, and share, along with access to the preferred form for modification, including data information, code, and parameters. That matters because many widely used “open-source” models are better described as open-weight or source-available rather than fully open-source in the software sense.

Even so, that licensing nuance hasn’t slowed adoption. In the market, “open enough to inspect, fine-tune, and deploy yourself” is already compelling. For many buyers, that level of openness is sufficient to make an open model more attractive than a black-box frontier API.

Why Frontier Models Still Matter

It would be wrong to claim that open models have already beaten frontier models across the board. Frontier labs still tend to lead in the hardest reasoning tasks, advanced multimodal performance, and integrated product experiences. They also move fast in areas such as large context windows, tool use, safety layers, and state-of-the-art agent behaviors.

But the market doesn’t reward raw intelligence alone. It rewards useful intelligence at an acceptable cost and risk profile. That’s why open models are gaining popularity even while frontier models often remain benchmark leaders. For many organizations, especially those shipping production systems at scale, the decisive questions are practical: Can the model be tuned? Can it run where the data is? Can costs be controlled? Can latency be optimized? Can the stack remain portable over time?

Open models answer those questions better than frontier APIs in a growing number of cases.
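The practical questions above can be turned into a simple weighted scorecard. The sketch below is one hedged way to do that, not an established methodology: every rating and weight is invented purely for illustration, and the criteria names are assumptions loosely mapped to the questions in the text.

```python
def weighted_score(ratings: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of 0-10 ratings; weights need not sum to 1."""
    total_weight = sum(weights.values())
    return sum(ratings[k] * w for k, w in weights.items()) / total_weight


# Hypothetical 0-10 ratings, invented for illustration only.
candidates = {
    "frontier_api": {"quality": 9, "cost": 4, "control": 3, "portability": 3},
    "open_weight": {"quality": 7, "cost": 8, "control": 9, "portability": 8},
}

# Two hypothetical buyers with different priorities.
weight_profiles = {
    "quality_first": {"quality": 8, "cost": 1, "control": 0.5, "portability": 0.5},
    "control_first": {"quality": 2, "cost": 3, "control": 3, "portability": 2},
}

for profile, weights in weight_profiles.items():
    scores = {name: weighted_score(r, weights) for name, r in candidates.items()}
    best = max(scores, key=scores.get)
    print(profile, {n: round(s, 2) for n, s in scores.items()}, "->", best)
```

With these made-up numbers, the ranking flips depending on the weights. That is the point: the outcome reflects a buyer’s priorities rather than a universal verdict, so a real evaluation would need measured ratings in place of the placeholders.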

Final Take

Open-source and open-weight AI models are gaining popularity over frontier models because the market is maturing. As quality gaps narrow, decisions increasingly shift from “Who has the smartest model?” to “Which model gives the best balance of performance, cost, control, and deployability?” On that broader scorecard, open models have become much more competitive.

The current evidence supports that conclusion. The Stanford AI Index shows open-weight models nearly closing the quality gap. Stanford cost data shows inference becoming radically cheaper. Databricks survey results show enterprise preference tilting toward open models. GitHub trend analysis shows open AI tooling compounding its lead in developer mindshare. Meta’s download milestone and Hugging Face ecosystem data show broad demand and growing geographic diversification.

So the real story isn’t that frontier models are disappearing. It’s that open models are becoming good enough, cheap enough, and flexible enough to win a much larger share of practical AI adoption.