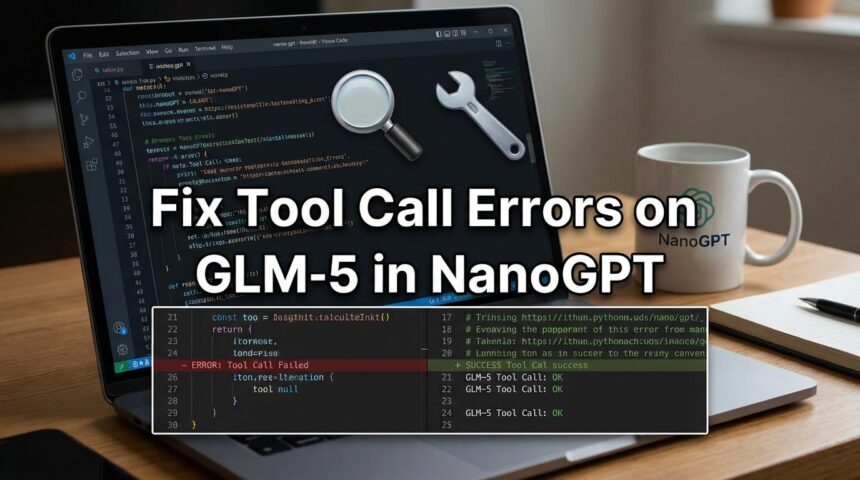

How to Fix GLM-5 Tool Calling Errors in NanoGPT

If you have ever tried to integrate the massive GLM-5 model into a minimalist framework like NanoGPT, you’ve probably encountered the frustrating “tool call serialization error.” This issue often manifests as malformed XML, infinite parsing loops, or JSON that simply won’t decode. The good news is that these aren’t signs of a “broken” model; they are predictable friction points between a complex agentic model and a simple generation loop—and they are entirely fixable.

Why Does This Happen? (Quick Answers)

- Format Mismatch: GLM-5 natively speaks XML for tools, but many simple parsers expect JSON.

- “Thinking” Process: The model outputs a continuous “chain of thought” before the actual tool call, confusing standard parsers.

- Double Escaping: Naive scripts often mishandle special characters (like newlines) inside arguments, corrupting the data.

- Loop Continuity: NanoGPT’s simple loop doesn’t know when to “pause” generation to execute code, leading to run-on text.

The Core Problem: Complexity vs. Simplicity

To understand the fix, you have to understand the mismatch. NanoGPT is built for transparency and simplicity—it generates text in one long, continuous stream. GLM-5, on the other hand, is an “Agentic” model designed for complex workflows. It doesn’t just talk; it plans.

GLM-5 uses a Dual-Stream Architecture. Before it executes a tool (like checking the weather), it generates “reasoning content”—an internal monologue. In a professional serving engine (like vLLM), these streams are separated automatically. In a raw NanoGPT loop, they get mixed together.

Additionally, GLM-5 uses an XML schema for tool calls, not the JSON format most developers are used to. A typical call looks like this:

<tool_call>get_weather<arg_key>location</arg_key><arg_value>San Francisco</arg_value></tool_call>

When NanoGPT treats this structural data as just “more text,” parsing errors are inevitable.
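One way to give a plain generation loop a "pause" point is to watch the decoded text for the closing tag and stop as soon as it appears. The sketch below assumes the sampling loop yields decoded text chunks one at a time. The function name `generate_until_tool_call` and the sample stream are illustrative and are not part of NanoGPT or GLM-5.

```python
from typing import Iterable, Tuple

STOP = "</tool_call>"  # closing tag from the example call above


def generate_until_tool_call(pieces: Iterable[str], stop: str = STOP) -> Tuple[str, bool]:
    """Accumulate decoded text chunks and halt once `stop` appears.

    `pieces` stands in for the decoded output of a sampling loop, one chunk
    per step. Returns (text, stopped); `stopped` is True when a complete
    tool call was seen.
    """
    buffer = ""
    for piece in pieces:
        buffer += piece
        end = buffer.find(stop)
        if end != -1:
            # Drop anything generated after the closing tag (run-on text).
            return buffer[: end + len(stop)], True
    return buffer, False


# Hypothetical stream: the closing tag is split across two chunks.
stream = [
    "<tool_call>get_weather<arg_key>location</arg_key>",
    "<arg_value>San Francisco</arg_value></tool",
    "_call> The weather is",
    " probably sunny",
]
text, stopped = generate_until_tool_call(stream)
```

Searching the accumulated buffer, rather than each chunk on its own, matters because a tokenizer may split the closing tag across several tokens.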

Tier 1: Quick Fixes (Prompting & Config)

These are the easiest solutions to try first. You usually don’t need to change the underlying code, just how you talk to the model.

1. Disable the “Thinking” Phase

The internal monologue is often what breaks the parser. If you don’t need the model to “show its work,” turn it off.

- In your inference arguments or API wrapper, set enable_thinking to False.

- This forces the model to skip the speculative text and output the XML payload directly.

2. Enforce the XML Contract

Don’t assume the model knows what format you want. Add a strict “Output Contract” at the very end of your system prompt:

- Explicitly state: “You must output function names and arguments strictly within <tool_call> tags.”

- Provide the pattern: Show the model exactly how <arg_key> and <arg_value> should look.

- Forbid extra text: Add “Do not include conversational prose outside these tags.”
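A contract along these lines can be kept as a constant and appended to whatever system prompt you already use. The wording, the `OUTPUT_CONTRACT` name, and the `build_system_prompt` helper are illustrative assumptions, not an official GLM-5 prompt.

```python
OUTPUT_CONTRACT = """\
Output contract:
- You must output function names and arguments strictly within <tool_call> tags.
- Use exactly this pattern:
  <tool_call>function_name<arg_key>name</arg_key><arg_value>value</arg_value></tool_call>
- Do not include conversational prose outside these tags."""


def build_system_prompt(base_prompt: str) -> str:
    """Append the contract last, so it is the final thing the model reads."""
    return base_prompt.rstrip() + "\n\n" + OUTPUT_CONTRACT


# Hypothetical base prompt for a single-tool assistant.
prompt = build_system_prompt("You are a weather assistant with access to get_weather.")
```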

3. Adjust Temperature

The clearer the signal, the better the syntax.

- Lower your temperature to 0.1.

- This makes sampling close to deterministic (only greedy decoding is fully deterministic), so the model is much less likely to “get creative” with XML tags.
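To see why a low temperature helps, it is enough to look at how dividing the logits by the temperature reshapes the probabilities. The sketch below uses made-up logits for three candidate tokens. The numbers are illustrative and do not come from GLM-5.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert logits to probabilities after dividing by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive; use argmax for greedy decoding")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up logits for three candidates: the correct closing tag and two distractors.
logits = [2.0, 1.0, 0.5]
probs_default = softmax_with_temperature(logits, 1.0)
probs_cold = softmax_with_temperature(logits, 0.1)
```

With these numbers, the top token holds roughly 63% of the probability mass at temperature 1.0 and more than 99.9% at temperature 0.1, so a stray token is far less likely to be sampled in the middle of a tag.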

Tier 2: The Middleware Fix (Regex Parsing)

If prompt engineering isn’t enough, you need to upgrade how your script reads the model’s output. Patterns written with Python’s standard re (regex) module often fail here because the dot (.) does not match newline characters by default.

How to Build a Better Parser

You need a parser that acts as “middleware”—intercepting the text before your application tries to use it.

- Enable DOTALL: When writing your regex to find <tool_call>, you must use the re.DOTALL flag. This tells Python that a “dot” (.) can match newlines. Without this, multi-line arguments (like code blocks) will break your parser.

- Don’t Re-Serialize: A common bug involves “double serialization.” If the model outputs a string that looks like JSON, don’t blindly run json.dumps() on it again. This creates “double-escaped” strings (turning " into \") that are impossible to read later.

- Verify Before Execution: Extract the <arg_value>, sanitize it (remove accidental trailing commas), and then attempt to parse it.
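The three points above can be combined into a small parser. This is a minimal sketch that assumes the tag layout shown earlier in this article. The helper names (`parse_value`, `parse_tool_calls`) and the sample call are illustrative, and the trailing-comma cleanup is a simple heuristic that could also alter a JSON string value containing `,}` or `,]`.

```python
import json
import re
from typing import Any, Dict, List

# DOTALL lets "." span newlines, so multi-line argument values still match.
TOOL_CALL_RE = re.compile(r"<tool_call>(.*?)</tool_call>", re.DOTALL)
ARG_RE = re.compile(r"<arg_key>(.*?)</arg_key>\s*<arg_value>(.*?)</arg_value>", re.DOTALL)
TRAILING_COMMA_RE = re.compile(r",\s*([}\]])")


def parse_value(raw: str) -> Any:
    """Decode a value once: JSON objects/arrays are parsed, anything else stays a string."""
    text = raw.strip()
    if text[:1] not in ("{", "["):
        return raw  # plain text: keep as-is, never json.dumps() it again
    cleaned = TRAILING_COMMA_RE.sub(r"\1", text)  # heuristic: drop ", }" and ", ]"
    try:
        return json.loads(cleaned)
    except json.JSONDecodeError:
        return raw  # fall back to the raw string instead of crashing


def parse_tool_calls(output: str) -> List[Dict[str, Any]]:
    """Extract every <tool_call> block as {"name": ..., "arguments": {...}}."""
    calls = []
    for body in TOOL_CALL_RE.findall(output):
        name = body.split("<arg_key>", 1)[0].strip()
        args = {key.strip(): parse_value(value) for key, value in ARG_RE.findall(body)}
        calls.append({"name": name, "arguments": args})
    return calls


# Hypothetical output with a multi-line argument and a trailing comma.
sample = (
    "<tool_call>run_code<arg_key>source</arg_key>"
    "<arg_value>print('a')\nprint('b')</arg_value>"
    "<arg_key>options</arg_key>"
    '<arg_value>{"timeout": 5,}</arg_value></tool_call>'
)
calls = parse_tool_calls(sample)
```

Here the multi-line `source` value is returned unchanged as a string, while the `options` value is decoded once into a dictionary after the trailing comma is removed.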

Tier 3: Advanced Options (System Architecture)

If you are building a production tool or need 100% reliability, you might need to touch the generation loop itself.

Constrained Decoding (Logit Masking)

This is the most robust technical fix. Instead of fixing errors after they happen, you prevent them from happening at all.

- This involves using a Finite State Machine (FSM).

- As the model generates tokens, the FSM checks the XML schema.

- If the model tries to generate a token that breaks the schema (like a random word where a closing tag should be), the system sets the probability of that token to 0.

- Libraries like Outlines or XGrammar can help integrate this into a Python loop.
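The idea can be illustrated with a toy example that does not use any of those libraries. The sketch below assumes a tiny vocabulary in which every tag is a single token and `NAME` and `TEXT` stand in for arbitrary content. Real implementations work on the tokenizer's actual vocabulary and compile the schema into a token-level automaton, so treat the vocabulary, state names, and logits here as hypothetical.

```python
import math
from typing import Dict, List

VOCAB = ["<tool_call>", "NAME", "<arg_key>", "</arg_key>",
         "<arg_value>", "</arg_value>", "</tool_call>", "TEXT"]

# state -> {allowed token: next state}
TRANSITIONS: Dict[str, Dict[str, str]] = {
    "start":      {"<tool_call>": "name"},
    "name":       {"NAME": "after_name"},
    "after_name": {"<arg_key>": "key", "</tool_call>": "done"},
    "key":        {"TEXT": "key_text"},
    "key_text":   {"</arg_key>": "need_value"},
    "need_value": {"<arg_value>": "value"},
    "value":      {"TEXT": "value_text"},
    "value_text": {"</arg_value>": "after_name"},
    "done":       {},
}


def mask_logits(logits: List[float], state: str) -> List[float]:
    """Set the logit of every token the FSM forbids in `state` to -inf."""
    allowed = TRANSITIONS[state]
    return [x if tok in allowed else -math.inf for tok, x in zip(VOCAB, logits)]


def constrained_greedy_decode(step_logits: List[List[float]]) -> List[str]:
    """Pick the best allowed token at each step and advance the FSM."""
    state, output = "start", []
    for logits in step_logits:
        if state == "done":
            break
        masked = mask_logits(logits, state)
        best = max(range(len(VOCAB)), key=lambda i: masked[i])
        token = VOCAB[best]
        output.append(token)
        state = TRANSITIONS[state][token]
    return output


# Made-up logits: this "model" prefers TEXT at almost every step.
biased = [0.0, 0.0, 1.0, 0.0, 0.0, 2.0, 0.5, 3.0]
closing = [0.0, 0.0, 1.0, 0.0, 0.0, 2.0, 4.0, 3.0]
tokens = constrained_greedy_decode([biased] * 8 + [closing])
```

Without the mask, greedy decoding on these logits would emit `TEXT` for the first eight steps. With the mask, the same logits produce a well-formed call with one key and one value.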

Low-Rank Adaptation (LoRA)

If the model simply refuses to follow your format, you can fine-tune it using LoRA.

- Create a dataset of prompts and perfect XML responses.

- Crucial Step: When training, mask the loss function so you only calculate error on the tool call tokens, not the user prompt.

- This concentrates the training signal on the tool call syntax instead of on reproducing the prompt.
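Loss masking is usually done by building a label sequence in which the prompt positions carry an "ignore" value. The sketch below assumes a PyTorch-style training setup, where `-100` is the default `ignore_index` of `torch.nn.CrossEntropyLoss`. The token ids and the helper name `build_masked_example` are made up for illustration.

```python
from typing import List, Tuple

IGNORE_INDEX = -100  # default ignore_index of torch.nn.CrossEntropyLoss


def build_masked_example(prompt_ids: List[int], response_ids: List[int]) -> Tuple[List[int], List[int]]:
    """Return (input_ids, labels); prompt positions do not contribute to the loss."""
    input_ids = list(prompt_ids) + list(response_ids)
    labels = [IGNORE_INDEX] * len(prompt_ids) + list(response_ids)
    return input_ids, labels


# Made-up token ids: three prompt tokens followed by four tool-call tokens.
input_ids, labels = build_masked_example([11, 12, 13], [501, 502, 503, 504])
```

The model still sees the full sequence as input, but only the four tool-call positions produce a gradient. Whether labels need to be shifted by one position depends on the training code you use.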

What NOT to Do

- Don’t ignore the re.DOTALL flag: This is the #1 reason parsing fails on long text.

- Don’t parse the “Thinking” stream: If you keep reasoning enabled, ensure your regex looks only for text after the /nothink token.

- Don’t use aggressive quantization: If you squash GLM-5 down to Q4 or lower, its ability to write strict syntax degrades rapidly. Stick to higher precision if possible.

Conclusion

Integrating GLM-5 into NanoGPT effectively requires bridging the gap between a complex agent and a simple script. Most issues can be solved by disabling the thinking step and fixing your regex flags. If stability is critical, constrained decoding guarantees that every tool call is syntactically well-formed, although it cannot guarantee that the model picks the right function or arguments.